Blog


Evvvveryone!

This is the final post on this blog. In our steady effort to transition to a new website, we're hereby moving the blog to a shiny new place:

https://visualprogramming.net/blog

So please point your RSS reader to this new URL. Also, we're still hoping to bring most of the archived posts over to the new blog to have them all in one place again. So please bear with us...

Comments

Again, your comments on blog-posts are very much appreciated, be it anonymously or, preferably, logged in with your Element (Matrix) chat user. How does this work? We're using the amazing Cactus Comments for this.

Every blog-post essentially has a corresponding chat room on Matrix, so you can also comment and track notifications there if you prefer. For an overview of all blog-comment rooms, join this space:

Guest Posts

We do accept guest posts! If you want to announce a vvvv-related event, technology or project, please go ahead and send us a pull request to the website's repo.

Specifically, blog-posts go here:

https://github.com/vvvv/visualprogramming.net/tree/master/content/blog

Formatting is in Markdown, and you can have a look at some of the other blog-posts to see how it all works. Looking forward to your contributions!

Previously on vvvv: vvvvhat happened in January 2022

So let's see,

vvvv gamma 2021.4.7 is out, which sounds like a small update but is really quite a biggie: it now includes the polished audio library VL.Audio. With no need to install anything extra, audio analysis, synthesis, playback, recording... it's all at your fingertips now.

We had the 17th vvvv online meetup, once again expertly moderated by baxtan. If you want to see what others are doing with your favorite toolkit, this is the place to watch! Save the date for the next edition on March 22nd!

We're now about halfway through the Mastering vvvv for teaching course, and a positive side effect for all of us is that it gets us working on the graybook: revising some content and adding new material. Here are some notable new chapters:

And much more to come...

Contributions

We got some new ones:

- Kinect2 FUSE Utils by lasal

- VL.Alembic by torinos

- VL.Preview.HDE by gregsn

- VL.SimpleHTTP by sebescudie

and received updates to the following:

- Demolition Media Hap Player

- Gamma Launcher

- VL.Fuse

- VL.HapPlayer

- VL.IO.M2MQTT

- VL.Audio.GPL

- VL.OpenEXR

Gallery

- #madewithvvvv by various patchers

Jobs

- Always keep an eye on the vvvv job board

- There are often some more on The Interactive & Immersive Job Board and dasauge.de

- If you need a vvvv specialist or are one yourself, check out this listing of vvvv specialists available for hire

That was it for February. Anything to add? Please do so in the comments!

Who sunep

When Sat, Mar 5th 2022 - 21:00 until Sun, Mar 6th 2022 - 02:00

Where Teater Momentum, Ny vestergade 18, 5000, Odense C, Denmark

Stoked to be in this lineup from 00:00 to 00:30 on Saturday night with my very first live Techno AV set.

Come by if you are in Odense

Event on Facebook

This has been a long time coming!

We had hoped to have this one out earlier, but finally we can release it into your caring hands: the best vvvv gamma ever (so far), with tons of bug fixes, improvements, and new features. Without further ado, you can divvvve right into it:

Bugfix releases

- 2021.4.7 on February 26, 2022

- 2021.4.6 on January 31, 2022

- 2021.4.5 on January 18, 2022

- 2021.4.4 on January 12, 2022

- 2021.4.3 on December 22, 2021

- 2021.4.2 on December 7, 2021

- 2021.4.1 on December 6, 2021

Here is an overview of the most notable changes:

UI/UX

Improved patching performance

Part of the magic of vvvv gamma is that it combines the advantages of a visual live programming environment with those of a compiled language. Getting those two to work together smoothly is a hard nut to crack. In this release, we were able to improve patching performance with larger projects by introducing a new compilation and hotswap strategy. For details, see this discussion.

Hamburger menu

The new hamburger menu in the top right corner of the editor gives you quick access to Settings, Themes, Licensing information and the About page.

Editor extensions

Are you missing a feature in the vvvv editor and don't want to wait for us to build it? Editor extensions allow you to essentially build plugins for vvvv itself. Available examples are a desktop color picker, a TUIO simulator and monitor, and a Spout monitor. Read more.

But the best thing about extensions is that they are just patches, so it takes just a few clicks to create your own. Read more.

Libraries

Updates to Stride 3d rendering

- TextureFX: We added a vast collection of easy-to-use nodes for applying visual effects to textures

- We added Pipet and MeshSplit nodes and fully reworked the Texture- and Buffer-creation nodes

- Latest Stride comes with two new PostFX: Fog and Outline

- We added a ShaderFX node factory that allows you to easily write composable shader snippets

- Materials can now be extended with custom shaders

FUSE - visual gpu patching

This release paves the way for the almighty new FUSE library, developed by dottore, everyoneishappy and texone. It allows you to use your GPU for things that typically require writing shaders. FUSE gives you access to procedural noise, signed distance field rendering, customizable particle systems, vector fields, fluid simulations and more, without having to write shader programs! Watch this video to see how to get started with FUSE.

Video input and playback

We added stable support for the effortless playback of a wide range of video formats for both Skia and Stride. In addition, we added ImagePlayer nodes for the playback of image sequences. Read more.

The ImagePlayers can also easily be synced over the network. Read more.

Updates to Skia 2d rendering

- 2d rendering is now fully GPU accelerated, which greatly improves performance in many scenarios

- SkiaRenderer and SkiaTexture nodes now also work on AMD GPUs

Language

- The "This" node can now be used in constructors as well as in generic patches. For details, see this discussion

- You can now use explicit type parameters. For details, see this discussion

- You can now patch a stateful region with BorderControlPoints! See the "ManageProcess" node for an example

And these were only the highlights. For all the details, please see the changelog.

Licensing

The release of a new version is always a good moment to make sure you still have a valid license for commercial use. To check, log into your account here on vvvv.org and then view your vvvv gamma licenses.

If you don't have one, simply buy a license the moment you start working on a commercial project. Don't forget that we also have monthly options!

What next? Expect the occasional 2021.4.x bug-fix release while we're starting work on the 2021.5 branch as per the updated roadmap.

Good patch!

When Tue, Feb 22nd 2022 - 20:00 until Tue, Feb 22nd 2022 - 22:00

We're meeting up on February 22nd, 8pm CET to catch up and get insights into what everyone is patching on. How will this work? As always. So please fill up your bucket of popcorn and invite all your vvvvriends and vvvvamily to join us!

Want to share your work?

Please do! Anything more or less related to vvvv, yourself and your projects. Share some thoughts, share your funny fails. Or just join and vibe with us...

Join Zoom Meeting

https://us02web.zoom.us/j/83928358860?pwd=NisyWTVzVjB1YWJLdllLSGcyWTVnQT09

Please enter your name + vvvv so we can let you into the call.

Any questions? Get in touch via meetup@vvvv.org. See you there!

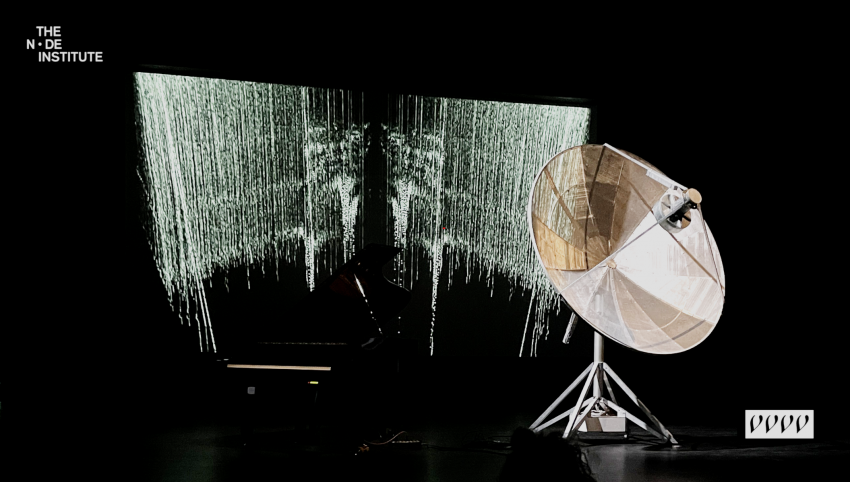

>> Image credits: Quadrature.co

Previously on vvvv: vvvvhat happened in December 2021

And the patch goes on:

We've released not one, not two, but 3 bugfix releases for vvvv gamma in January. Read all about them in The ChangeLog.

We've also been hard at work on turning VL.Audio into a first stable release that will eventually ship with vvvv directly, i.e. no more extra install just to play with audio. Normal. Read all about it in the post with the captivating title Breaking - VL.Audio approaching stable.

The Mastering vvvv for teaching course has started. We got a whopping 42 applications, which unfortunately we couldn't all accommodate. We had a tough time choosing but eventually settled on a nice blend of 20 people who are joining us from 9 different countries. Ah, the joys of the modern age. If you were not chosen this time, we're hoping to have a new offer for you soon!

Oh and in case you missed it, you can still rewatch the 16th vvvv online meetup.

Contributions

We got some new ones:

and received updates to the following:

Gallery

- Illuminarium 2021 - 360 Pinball by colorsound

- Contact by Emanuel Gollob

- #madewithvvvv by various patchers

Jobs

- Always keep an eye on the vvvv job board

- There are often some more on The Interactive & Immersive Job Board and dasauge.de

- If you need a vvvv specialist or are one yourself, check out this listing of vvvv specialists available for hire

That was it for January. Anything to add? Please do so in the comments!

Dear audiophiles!

This is to announce some long-overdue work on the VL.Audio pack, with the goal of finally releasing a stable version. There are still some pending todos, but the main things are done. So in its latest previews, you'll find the following, partly breaking, changes:

Driver and Timing Configuration

The singleton AudioEngine node is gone and has been replaced by two simpler singleton nodes:

- DriverSettings

- TimingSettings

But the idea is to use those mostly only when exporting applications, because usually you'd now simply use the new Audio Configuration extension (Alt+C). The UI for the extension is still missing at this point, but you get the idea. Meanwhile, you can manually modify \AppData\vvvv\gamma\VL.Audio.Configuration.xml (requires a restart of vvvv).

A third alternative is to use the new SettingsFromFile node that allows you to specify such a configuration.xml that you may want to check into a git-repo with your project.

Still, to get any audio out, you'll need either the dedicated ASIO driver of your sound device or one of the generic ASIO drivers installed.

Buffer nodes

A new set of nodes allows you to record/play and save/load audio using buffers. For now, these nodes are still marked with the Experimental aspect because we may still apply breaking changes, but they are ready for testing. Create a Buffer node and then work with it using the following:

- BufferRecorder

- BufferPlayer

- BufferWriter

- BufferReader

- WavReader (Buffer)

- WavWriter (Buffer)

- WaveForm (Buffer)

Misc

- WaveForm: returns a spread of floats you can use to draw a WaveForm

- WavWriter: for recording live audio to a .wav file on disk

- StereoMixer, MatrixMixer

- ValueSequence
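The WaveForm node is described above as returning a spread of floats you can use to draw a waveform. As a rough, generic illustration of that idea, here is a Python sketch that reduces a block of mono samples to one peak value per display bucket. It is not VL.Audio's actual implementation. The function name `waveform_peaks` and the peak-per-bucket strategy are assumptions made for this example only.

```python
def waveform_peaks(samples, bins):
    """Hypothetical helper: reduce mono audio samples to `bins` peak values.

    Each output value is the maximum absolute amplitude within one bucket,
    which is a common way to get a drawable waveform overview.
    Empty buckets (fewer samples than bins) yield 0.0.
    """
    if bins <= 0:
        raise ValueError("bins must be positive")
    n = len(samples)
    peaks = []
    for i in range(bins):
        # Integer bucket boundaries spread the samples as evenly as possible
        start = i * n // bins
        end = (i + 1) * n // bins
        chunk = samples[start:end]
        peaks.append(max((abs(s) for s in chunk), default=0.0))
    return peaks


if __name__ == "__main__":
    # Four samples into two buckets: [0.0, 0.5] and [-1.0, 0.25]
    print(waveform_peaks([0.0, 0.5, -1.0, 0.25], 2))
```

Taking the absolute peak per bucket keeps short transients visible even when many samples map to a single pixel column. Averaging would smooth them away.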

Fixed issues

- Creating and disposing VL.Audio player leaks memory?

- VL.Audio Player - Break playback by hovering any FileStreamSignal link or pin

- Record audio in gamma

- AudioEngine - Dynamic enum not set on startup

- Gamma crashes when changing samplerate

So please give the latest preview a spin and report your findings!

We're meeting up on January 25th, 8pm CET to catch up and get insights into what everyone is patching on. How will this work? Something like the usual :). So please fill up your bucket of popcorn and invite all your vvvvriends and vvvvamily to join us!

Want to share your work?

Please do! Anything more or less related to vvvv, yourself and your projects. Share some thoughts, share your funny fails. Or just ask some questions...

No sign-up, no line-up! We'll just have this as an open call that anyone can join. Surprise!

Or watch the stream on YouTube

Any questions? Get in touch via meetup@vvvv.org. See you there!

Previously on vvvv: vvvvhat happened in November 2021

Happy new evvvveryone!

It's that time of the year again where we're throwing out the old one and are unconditionally happy for yet another one to come. That's the spirit! So while you mentally prepare for the upcoming meetup on January 25th, you have a chance to rewatch the 15th vvvv online meetup, the last one from 2021.

Last thing in December for us was releasing another dot-release for the 2021.4 series of vvvv gamma. Changes and download for it can be found in the usual place.

And here's to all educators among you:

With vvvv gamma finally being in proper shape, we're starting an educational offensive. First off, we're running a super-spreader event with a course tailored to educators. For full details and application, please see:

Course: Mastering vvvv for teaching

Contributions

We got a new one:

and received updates to the following:

And there is a growing number of video tutorials in Chinese by lavalse

Gallery

- Perpetual Myth by lasal

- #madewithvvvv by various patchers

Jobs

- Always keep an eye on the vvvv job board

- There are often some more on dasauge.de

- If you need a vvvv specialist or are one yourself, check out this listing of vvvv specialists available for hire

That was it for December. Anything to add? Please do so in the comments!

When Mon, Jan 31st 2022 - 18:00 until Thu, Mar 24th 2022 - 21:00

Dear educators!

With the all-new vvvv gamma finally having all the necessary bits together for being a serious creative coding environment, we believe it is also a premium tool for education. In case you need to argue:

Reasons to use vvvv in education

- It is free for educational use without any restrictions

- It is quick and easy to install, with no copy protection or need for registration

- It comes with extensive documentation, accessible right from an integrated Help Browser

- It connects to most popular protocols and devices

- It allows you to teach programming concepts like object-oriented programming and dataflow in a visual way

- While being a visual language, it can easily be extended via C# and .NET NuGet packages

- Its libraries are open-source, thus can be inspected and learned from

Becoming a vvvv teacher

If you want to help us spread vvvv by teaching (with) it, we want to make sure you don't feel lost in its universe. "Mastering vvvv for teaching" is an intensive 8-week (16 sessions) live online course with the goal of making you a confident vvvv teacher.

If you're curious, please head over to The NODE Institute for a detailed description of the course and a form to apply:

Application deadline: January 16th, 2022

Course period: January 31st - March 24th

Any questions? Get in touch!

Hope to see you there!

P.S.: If you're interested in such a course but rather for commercial applications than for education, here is a little heads-up: If everything goes according to plan, we'll be running a similar course in summer 2022 with a broader focus.
